NVIDIA Cloud Partners (NCPs), cloud service providers (CSPs), and enterprise customers deploy and run AI workloads on NVIDIA infrastructure by designing pipelines around real-world workload boundary conditions. Simplismart operates as an abstraction and orchestration layer on top of this infrastructure, helping cloud providers and their end customers manage the complexity of building, tuning, and optimizing these pipelines to meet their specific performance, cost, and other deployment needs. NVIDIA and Simplismart will continue to strengthen these optimized inference capabilities together. (Read the press release here)

NVIDIA defines NCPs as data center partners that follow NVIDIA's reference architecture, combining GPU servers, storage, networking, and software into high-performance accelerated computing environments. On this foundation, NCPs offer their enterprise customers hosted computing and purpose-built services to handle diverse workloads and demanding applications. Simplismart strengthens these NCP offerings by enabling faster AI operationalization through three key capabilities.

First, Simplismart maintains and optimizes AI endpoints built on NVIDIA Inference Microservices (NIM), which NCPs can offer directly to AI application builders to power high-volume AI use cases such as multimedia generation, voice agents, document parsers, and more. This unlocks low-latency inference at global scale while helping teams maintain governance, observability, and performance control across production environments.

Second, Simplismart provides rapid scaling and workflow templatization across generative AI workloads and diverse deployment environments within a unified platform. This lets the NCP compete with the comprehensive solutioning capabilities of hyperscaler clouds such as AWS, Azure, and GCP.

Lastly, popular and highly anticipated AI models become available to NCP customers for testing and deployment as soon as they launch. This helps teams stay current with the rapidly evolving AI model ecosystem while maintaining production-grade deployment standards.

“As enterprises move from AI pilots to production, and Indian consumers adopt AI for a variety of daily use cases, we are seeing a significant rise in demand for AI inference. But at scale, both of them are two very different beasts. The former requires control & governance over their infrastructure, while the latter requires ROI at scale. One size does not fit all.

“For example, a bank serving millions of daily customers using AI voice agents will be focused on quick response times. While the same bank, when building a document parsing AI workflow, will focus on processing the maximum number of documents at minimum cost. Simplismart’s inference platform is designed to help AI builders navigate these complexities at scale, and we are committed to bringing this game-changing proposition to NVIDIA Cloud Partners,” said Amritanshu Jain, CEO & Co-founder at Simplismart.

“India’s AI startup ecosystem is primed for acceleration, driven by exceptional technical talent and global ambition,” said Tobias Halloran, Director of EMEAI Startups and Venture Capital at NVIDIA. “NVIDIA is accelerating this momentum by giving founders direct access to accelerated computing, scalable AI infrastructure, and programs like NVIDIA Inception and the NVIDIA VC Alliance - helping startups scale faster and build for global markets. We are excited to work with teams like Simplismart to drive this next phase of AI adoption.”

Context: Inference Takes Center Stage in AI Adoption

Industry indicators show that inference workloads now represent an increasingly dominant portion of AI compute, particularly as generative AI shifts from prototype demos to real-world applications such as large-scale conversational agents, automated document processing, multimedia content generation, and agentic AI. In production settings, performance at scale hinges as much on the efficiency of the inference stack as on model capabilities.

This evolution reshapes how teams think about AI infrastructure:

- Latency-critical applications (e.g., real-time voice agents across STT → LLM → TTS pipelines) demand consistent, low-latency inference with deterministic GPU scheduling.

- High-throughput workflows (e.g., batch document processing and structured data extraction) optimize around peak utilization and cost per inference at scale.

- Compute-intensive multimedia content generation (image, video, multimodal diffusion workloads) requires GPU-aware orchestration, memory optimization, and elastic scaling.

- Agentic workflows (multi-step reasoning, tool-calling, RAG pipelines) require low-latency model chaining, stateful orchestration, and coordinated scaling across heterogeneous models.

- Multi-environment deployment (cloud, on-prem, hybrid) requires operational consistency, governance, and performance tuning across heterogeneous infrastructures.
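
The latency-critical and high-throughput profiles above pull cost per inference in different directions. As a minimal sketch of that arithmetic, the function below estimates the cost of 1,000 inferences on a single GPU. The function name and every price, request rate, and utilization figure are made-up illustrations, not measured or vendor-published numbers.

```python
def cost_per_1k_inferences(gpu_cost_per_hour: float,
                           peak_throughput_rps: float,
                           utilization: float) -> float:
    """Estimate the cost of 1,000 inferences on one GPU.

    peak_throughput_rps: requests/second the GPU can serve when fully loaded.
    utilization: fraction of that capacity actually used, in (0, 1].
    """
    if peak_throughput_rps <= 0:
        raise ValueError("peak_throughput_rps must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    inferences_per_hour = peak_throughput_rps * 3600 * utilization
    return gpu_cost_per_hour / inferences_per_hour * 1000


# Hypothetical figures: a voice agent keeps headroom for latency,
# while a batch document job runs the GPU close to saturation.
voice_agent = cost_per_1k_inferences(2.0, peak_throughput_rps=10, utilization=0.5)
batch_docs = cost_per_1k_inferences(2.0, peak_throughput_rps=40, utilization=0.9)
print(f"voice agent: ${voice_agent:.4f} per 1k, batch docs: ${batch_docs:.4f} per 1k")
```

With these illustrative inputs the batch job is several times cheaper per inference, which is why the two workload types are tuned against different objectives.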

Meeting these distinct operational objectives simultaneously and at scale demands abstraction over the complexity of GPU-accelerated environments. This is the problem Simplismart’s platform is engineered to solve.

Platform Overview: Abstraction, Orchestration, and Performance

At its core, the Simplismart inference platform functions as a comprehensive abstraction and orchestration layer over NVIDIA GPU-accelerated infrastructure, designed to streamline how inference workloads are defined, deployed, and scaled:

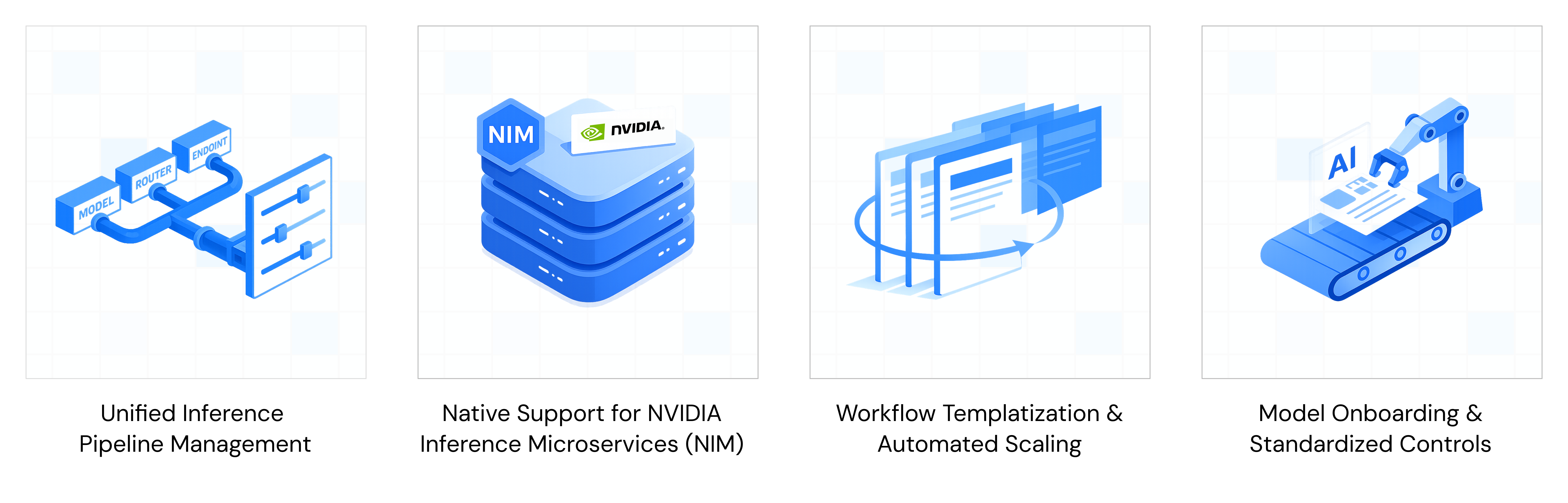

1. Unified Inference Pipeline Management

The platform abstracts low-level concerns such as runtimes, schedulers, and custom scaling policies, enabling teams to define pipelines that respect performance SLAs (latency and throughput) alongside cost constraints. This reduces operational complexity for both cloud providers offering inference services and enterprises hosting critical workloads.
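
To make the idea of a pipeline that respects latency, throughput, and cost constraints concrete, here is a small sketch of SLA-constrained selection. It is not Simplismart's API: the `Candidate` and `Sla` types, the `pick_config` helper, and all numbers are hypothetical and only illustrate the selection logic an orchestration layer could automate.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Candidate:
    """A benchmarked deployment option (all values hypothetical)."""
    name: str
    p95_latency_ms: float
    throughput_rps: float
    cost_per_hour: float


@dataclass(frozen=True)
class Sla:
    """Constraints a pipeline must satisfy."""
    max_p95_latency_ms: float
    min_throughput_rps: float
    max_cost_per_hour: float


def pick_config(candidates: Sequence[Candidate], sla: Sla) -> Optional[Candidate]:
    """Return the cheapest candidate meeting every constraint, or None."""
    feasible = [
        c for c in candidates
        if c.p95_latency_ms <= sla.max_p95_latency_ms
        and c.throughput_rps >= sla.min_throughput_rps
        and c.cost_per_hour <= sla.max_cost_per_hour
    ]
    return min(feasible, key=lambda c: c.cost_per_hour, default=None)


CANDIDATES = [
    Candidate("small-batch", p95_latency_ms=180, throughput_rps=20, cost_per_hour=4.0),
    Candidate("large-batch", p95_latency_ms=900, throughput_rps=120, cost_per_hour=4.0),
    Candidate("two-replicas", p95_latency_ms=170, throughput_rps=40, cost_per_hour=8.0),
]

voice_sla = Sla(max_p95_latency_ms=250, min_throughput_rps=15, max_cost_per_hour=10)
docs_sla = Sla(max_p95_latency_ms=2000, min_throughput_rps=100, max_cost_per_hour=5)

print(pick_config(CANDIDATES, voice_sla))
print(pick_config(CANDIDATES, docs_sla))
```

The same candidate list yields different choices for the voice and document SLAs, mirroring how one organization can need different tuning for different workloads.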

2. Native Support for NVIDIA Inference Microservices (NIM)

By deeply integrating with NVIDIA Inference Microservices (NIM), the platform supports containerized, model-specific microservices that can be deployed as managed endpoints. NIM itself is designed to deliver optimized inference engines and runtime components for models running on NVIDIA GPUs, further enhancing performance and responsiveness.
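
For readers who have not used a NIM endpoint, the sketch below builds a chat request without sending it. It assumes the endpoint exposes an OpenAI-compatible `/v1/chat/completions` route, as NVIDIA documents for NIM LLM containers. The base URL, model name, and `build_chat_request` helper are placeholders, and nothing here reflects how Simplismart itself wraps or manages these endpoints.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       max_tokens: int = 128) -> urllib.request.Request:
    """Build (but do not send) a chat completion request.

    Assumes an OpenAI-compatible /v1/chat/completions route on the endpoint.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder endpoint and model name; substitute the values for your deployment.
req = build_chat_request("http://localhost:8000", "example-org/example-model",
                         "Summarize this invoice in one sentence.")
print(req.get_method(), req.full_url)

# Sending is left commented out so the sketch runs without a live endpoint:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp))
```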

3. Workflow Templatization & Automated Scaling

The platform comes equipped with predefined workflow templates and automated scaling policies tailored to generative AI use cases, including voice AI pipelines, document processing, multimedia content generation, and agentic workflows. These templates accelerate time-to-production by reducing repetitive engineering overhead.
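
As an illustration of what an automated scaling policy can compute, the sketch below implements a generic target-tracking rule: keep roughly a fixed number of in-flight requests per replica, within a minimum and maximum replica count. This is a common autoscaling pattern, not a description of Simplismart's policies, and the function name, parameters, and defaults are assumptions.

```python
import math


def desired_replicas(in_flight_requests: int,
                     target_per_replica: int,
                     min_replicas: int = 1,
                     max_replicas: int = 8) -> int:
    """Replica count that keeps in-flight load near the per-replica target."""
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    if min_replicas > max_replicas:
        raise ValueError("min_replicas must not exceed max_replicas")
    needed = math.ceil(in_flight_requests / target_per_replica)
    # Clamp so the pool never scales to zero or past the configured ceiling.
    return max(min_replicas, min(max_replicas, needed))


# Hypothetical load levels for a pool targeting 4 in-flight requests per replica.
for load in (0, 10, 100):
    print(load, desired_replicas(load, target_per_replica=4))
```

A latency-critical template would typically use a lower per-replica target than a batch template, trading higher cost for more headroom.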

4. Model Onboarding & Standardized Controls

New open-source models can be onboarded with day-zero support, optimized for performance, and validated for production within the platform. Built-in observability, version control, and access governance ensure teams can move fast while maintaining reliability and traceability.

Why This Matters: Operational Control Meets Enterprise-Grade Performance

As enterprises move from AI pilots to production, inference demand rises rapidly. Pilot environments prioritize flexibility, while production systems require governance, reliability, predictable latency, and clear ROI at scale. A single approach cannot effectively support both.

This exposes a core tension in AI infrastructure design:

- Control vs. Performance Tradeoffs: Traditional managed inference services often limit customization. Fully DIY infrastructure and orchestration, on the other hand, consume engineering cycles without guaranteeing cost efficiency or performance consistency.

- Platformization of Inference: By offering a platform that unifies infrastructure control, pipeline orchestration, and performance optimization, Simplismart empowers teams to balance cost, latency, and throughput according to their specific business and technical priorities.

Implications for Cloud Providers and Enterprises

For cloud infrastructure providers, the Simplismart platform reduces time-to-market for managed inference offerings by providing preconfigured services that application teams can consume without building from the ground up.

For enterprises deploying models across public clouds, private data centers, or hybrid environments, Simplismart’s platform reduces complexity, enables performance tuning across environments, and supports AI deployments with predictable cost and reliability.

If you are a cloud provider or an enterprise interested in exploring the Simplismart platform, let's talk.